How DALL-E 2 and Other AI Art Generators Work

Explained

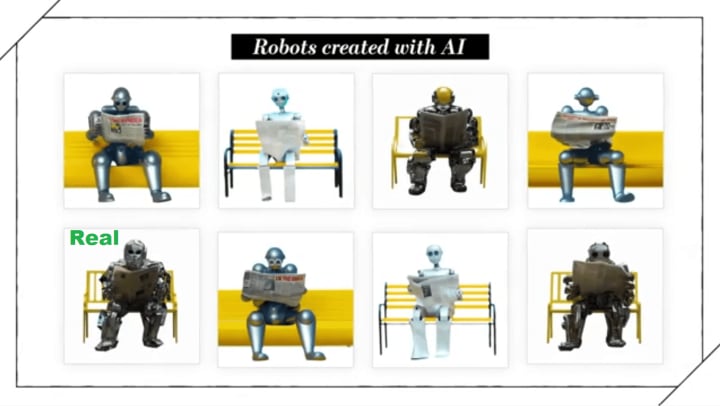

Once upon a time, a text-to-image AI art generator was tasked with creating a humanoid robot reading the newspaper, sitting on a yellow bench. Actually, there were lots of different kinds of robots who liked to sit and read the paper. But only one of these robots is actually real. Well, a real human in a real robot suit.

The rest of these robots were created by artificial intelligence using text-to-image generators. But how do they work? With new tools like OpenAI’s DALL-E 2 and Stability AI’s DreamStudio, you can type in a phrase and out comes an image. Nothing in the generated images you’re looking at right now is copied and pasted from Google Images or some photo agency.

But how does the quality compare to real photos? We came up with a challenge to test the limits of AI photo generation and to see if it can compete with humans. Or, in our case, a real photographer and a real man named Peter Kokos, with his real, elaborate, handmade robot suit, which takes an hour to put on.

“Oh, am I lined up?” “Everything, uh…”

What does this mean for telling real from fake on the internet? I’m gonna explain it all. With the help of… this guy. I don’t think he’s actually gonna be that much help.

Let’s start with how this works. Both DALL-E 2 and DreamStudio provide a simple text box. You type whatever comes to mind. Say, a photo of a robot in the forest with a balloon.

Then both systems take a few seconds to generate some pretty accurate and funny images. You might think, oh, the system is just looking at images of robots and balloons and putting them together. But no. In the background, your text is sent to OpenAI or Stability AI, where their powerful artificial intelligence has learned to make sense of that text and translate it into completely original images.
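To make that "your text is sent to OpenAI" step a bit more concrete, here is a rough sketch of what the request behind the text box might look like. It assumes the endpoint and field names of OpenAI's public image-generation REST API, which this story doesn't describe, and the key is just a placeholder. The sketch only builds the request and never sends it.

```python
import json
import urllib.request

# Assumption: endpoint and field names follow OpenAI's public
# image-generation REST API; the story itself does not specify them.
API_URL = "https://api.openai.com/v1/images/generations"


def build_image_request(prompt, api_key, n=1, size="512x512"):
    """Package a text prompt as an HTTP POST request (built, not sent)."""
    payload = {"model": "dall-e-2", "prompt": prompt, "n": n, "size": size}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# "YOUR_API_KEY" is a placeholder, not a real credential.
request = build_image_request(
    "a photo of a robot in the forest with a balloon",
    api_key="YOUR_API_KEY",
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

All the heavy lifting happens on the server. The only thing that leaves your computer is a short piece of text plus a few settings.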

How did it learn what is what? By looking at billions of labeled images. Think of it like flashcards. The AI sees all of those and starts to learn that many sci-fi robots have eyes, and boxy heads, and a square stomach. Same with balloons. It learned that most balloons are this shape, and have strings on them, and even have this little tie thing.
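The flashcard idea can be sketched in a few lines of code. This is only a toy analogy: the real systems train deep neural networks on actual pixels, not counters on hand-written feature lists, and the flashcards and feature names below are made up for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical "flashcards": a caption paired with features seen in the image.
FLASHCARDS = [
    ("a robot on a bench", {"boxy head", "eyes", "bench"}),
    ("a robot in a forest", {"boxy head", "eyes", "trees"}),
    ("a red balloon", {"round shape", "string"}),
    ("a balloon at a party", {"round shape", "string", "cake"}),
    ("a robot holding a balloon", {"boxy head", "eyes", "round shape", "string"}),
]


def learn_associations(cards):
    """Count how often each visual feature shows up alongside each caption word."""
    counts = defaultdict(Counter)
    for caption, features in cards:
        for word in set(caption.split()):
            counts[word].update(features)
    return counts


def typical_features(counts, word, top=2):
    """Return the features seen most often with a word, sorted alphabetically."""
    return sorted(feature for feature, _ in counts[word].most_common(top))


associations = learn_associations(FLASHCARDS)
print(typical_features(associations, "robot"))    # ['boxy head', 'eyes']
print(typical_features(associations, "balloon"))  # ['round shape', 'string']
```

After only five cards, the word "robot" is already tied most strongly to boxy heads and eyes, and "balloon" to round shapes and strings. Scale that up to billions of images and a far more capable model, and you get the kind of knowledge described above.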

Through deep learning, it not only knows that that’s a robot and that’s a balloon, it also knows the relationship between the two distinct objects. So it automatically puts the balloon in the robot’s hand. Just like I knew to put the balloon in his hand on this shoot. Or at least DALL-E knew to put the balloon in a hand.

DreamStudio was pretty confused by the whole robot balloon thing.

Creating basic images is just the start of what you can do with these tools. Say I wanted to take a Polaroid of this real robot sitting by the pool, drinking a beer. Smile! I need to get an instant camera. Perfect. And a beer. Wall Street Journal and a beer.

Written by Audio AI
